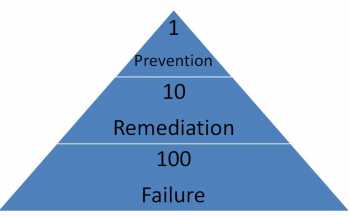

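The 1-10-100 rule is a quality management concept developed by G. Labovitz and Y. Chang. In its usual form, it holds that preventing an error costs about $1, correcting it later costs about $10, and leaving it uncorrected costs about $100. This makes the rule a useful tool for quantifying the hidden costs of poor quality.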


Although the exact figures may vary, the principle behind the rule still applies when examining data quality. So, let’s look at how the rule works and why it matters.

The hidden costs of poor quality

The 1-10-100 rule draws attention to the hidden costs of waste caused by inadequate data quality. It shows that correcting flawed data can be up to ten times more expensive than preventing errors from entering the system in the first place. The consequences of poor data quality can be significant and far-reaching.


Remediation costs more than prevention

The principle suggests that the cost of fixing bad data is an order of magnitude greater than the cost of stopping it from entering the system.

Consider the visible cost of setting up back-office teams whose only job is to find and fix errors that originate in the front office. In effect, we pay to capture the same data twice. Yet the cost of remediation is small compared with the cost of keeping erroneous data.

Failure costs more than remediation

The impact of low-quality data on our operational efficiency should not be underestimated.

An incorrect invoice amount might result in non-payment; delivering to the wrong address incurs the cost of redelivery; and an inaccurate risk assessment increases the likelihood of bad debts.

Therefore, our primary focus should be on preventive measures rather than reactive remediation.
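To make the arithmetic concrete, here is a minimal sketch that compares the three tiers for a batch of flawed records. The per-record costs of 1, 10, and 100 are illustrative assumptions that follow the rule's name, and the `total_cost` function and the 1,000-record scenario are hypothetical; only the ratios matter.

```python
# Illustrative per-record costs in the spirit of the 1-10-100 rule.
# These figures are assumptions; real costs vary by organization.
COST_PREVENT = 1      # verify a record at the point of entry
COST_REMEDIATE = 10   # find and correct the record later
COST_FAILURE = 100    # leave the error in place and absorb the consequences


def total_cost(bad_records: int, caught_at_entry: int, fixed_later: int) -> int:
    """Return the total cost of handling `bad_records` flawed records.

    Records that are neither caught at entry nor fixed later are
    counted as failures.
    """
    if min(bad_records, caught_at_entry, fixed_later) < 0:
        raise ValueError("counts must be non-negative")
    if caught_at_entry + fixed_later > bad_records:
        raise ValueError("cannot handle more records than exist")
    failed = bad_records - caught_at_entry - fixed_later
    return (
        caught_at_entry * COST_PREVENT
        + fixed_later * COST_REMEDIATE
        + failed * COST_FAILURE
    )


# Hypothetical scenario: 1,000 flawed records handled three different ways.
print(total_cost(1000, 1000, 0))  # all prevented at entry -> 1000
print(total_cost(1000, 0, 1000))  # all remediated later   -> 10000
print(total_cost(1000, 0, 0))     # all left to fail       -> 100000
```

Under these assumed figures, every record that slips past the point of entry costs ten to a hundred times more than one that is stopped there.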

Our focus should be on prevention

Regrettably, many data quality initiatives concentrate on fixing issues after they have occurred.

This approach overlooks the value of proactively keeping poor data out of our systems. It raises the question: What steps is your company taking to stop flawed data from entering its operations?
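One possible step is to validate records at the point of entry, before they are stored. The sketch below is only an illustration: the `validate_invoice` function, the field names `amount` and `address`, and the rules themselves are hypothetical, chosen to mirror the invoice and delivery examples discussed earlier.

```python
def validate_invoice(record: dict) -> list[str]:
    """Return the problems found in a record; an empty list means it passes.

    The fields and rules are hypothetical; a real system would define its own.
    """
    problems = []

    # The amount must be a real number greater than zero (booleans excluded).
    amount = record.get("amount")
    is_number = isinstance(amount, (int, float)) and not isinstance(amount, bool)
    if not is_number or amount <= 0:
        problems.append("amount must be a positive number")

    # The delivery address must contain something other than whitespace.
    if not str(record.get("address") or "").strip():
        problems.append("delivery address is missing")

    return problems


# A record is only accepted when no problems are reported.
candidate = {"amount": -5, "address": " "}
issues = validate_invoice(candidate)
if issues:
    print("Rejected at entry:", issues)
else:
    print("Accepted")
```

Rejecting the record here corresponds to the cheapest tier of the rule: the error is caught before anyone has to correct it later or deal with its consequences.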

At the core of successful data management lies the recognition that data can be both an asset and a liability. Investing in robust data quality practices lets organizations realize the value of their enterprise information assets while avoiding the damaging consequences of compromised data.

Join us on a journey to unlock the full potential of your data quality. Together, we can steer your company towards excellence in a rapidly changing world.
